I’ve never fully trusted infrastructure that looks perfect. Not because I think people are dishonest, but because I’ve seen how things actually work when you’re the one building them.

Most companies have a status page. It tells you what is happening right now. But it almost never tells you what just happened. And that’s the part that matters. Because behind stable systems, there is always something — something that didn’t behave as expected, something that had to be investigated, or something that looked worse than it actually was. If everything always looks clean from the outside, you’re not seeing the full picture.

When we started building WAYSCloud, this stopped being theoretical. It became a real question I had to answer: what do we actually do when something goes wrong? Not in a pitch deck. Not in theory. But in practice.

I went back and forth on this. More than once. With myself — and with the team. Because it’s easy to talk about open source, no vendor lock-in and transparency. But that mostly applies to architecture. Not to reality.

The real question is operational. What happens when something breaks? Or behaves in a way you didn’t expect? Or turns into something that is simply uncomfortable to explain? That’s usually where the transparency stops.

And that’s where this got uncomfortable for me as well.

Because if we were actually going to be transparent, we couldn’t just show the clean parts. We couldn’t just show what worked as expected. We would have to show the things that looked serious at first, the things that turned out to be nothing, and the things we didn’t get right immediately.

And yes — that can look bad.

One of the cases we chose to publish could easily have stayed internal.

It involved a high-severity alert related to potentially illegal content. At first glance, it looked serious. The kind of situation most companies would never expose publicly.

But after investigation and formal review, it turned out to be a false positive triggered by test data.

No actual content. No breach. No impact.

You can read the full case here: False positive CSAM alert involving test data and external IP.

What actually happened was this:

During development of a monitoring system, test data was manually inserted into a production database to simulate detection scenarios. The data wasn’t clearly marked as test data, and it wasn’t removed afterwards.

At a later point, one of these entries triggered a high-severity alert through an external detection service. The alert appeared as a confirmed match and was treated as a real incident.

To make things worse, this specific entry contained a real external IP address, not a reserved test address. That meant an unrelated third party could, in theory, be incorrectly associated with something extremely serious.
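
For readers wondering what a "reserved test address" means in practice: certain address blocks are set aside for documentation and examples (RFC 5737 for IPv4, RFC 3849 for IPv6) and are never assigned to a real party. The sketch below shows one way test fixtures could be checked against those blocks before they are inserted anywhere. It is an illustration only, using Python's standard `ipaddress` module; the function name `is_documentation_address` is hypothetical and does not describe the tooling involved in this incident.

```python
import ipaddress

# Address blocks reserved for documentation and examples
# (RFC 5737 for IPv4, RFC 3849 for IPv6). They are not assigned to real parties.
DOCUMENTATION_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("2001:db8::/32"),
]


def is_documentation_address(value: str) -> bool:
    """Return True if `value` falls inside a reserved documentation range.

    Hypothetical helper for illustration; raises ValueError on invalid input.
    """
    addr = ipaddress.ip_address(value)
    return any(
        addr.version == net.version and addr in net
        for net in DOCUMENTATION_NETWORKS
    )


if __name__ == "__main__":
    # A fixture using a reserved address passes; a routable one is flagged.
    for candidate in ["203.0.113.7", "8.8.8.8", "2001:db8::1"]:
        status = "ok for test data" if is_documentation_address(candidate) else "NOT reserved"
        print(f"{candidate}: {status}")
```

A check like this does not replace clearly marking and removing test data, but it limits the damage if an entry is left behind: a forgotten fixture can no longer point at an unrelated third party.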

We investigated it immediately.

We verified that no real files, uploads or user activity existed. We confirmed that the IP had no interaction with our systems. We validated everything across logs and storage. We identified the root cause. We involved relevant authorities, including Kripos, and clarified the situation fully.

Nothing actually happened.

But it could easily have looked like it did.

Most companies would have stopped there. Internally resolved, closed, and invisible to the outside.

We didn’t.

We documented it.

Because that situation is real. It shows how systems behave. How alerts are triggered. How something can look critical — and then not be. And that’s not unique to us. It happens everywhere. The difference is that most of it is never shown.

Let’s be honest: this comes with a cost.

This is not always the smartest business decision in the short term. It can be misunderstood, taken out of context, or simply make things look worse than they are. And yes — it can have commercial downsides.

But I still chose it.

Because if you talk about control, transparency and trust, then that has to include the operational reality as well. Not just the architecture diagrams.

For me, trust in infrastructure is not built on the idea that nothing ever goes wrong. It’s built on how things are handled, how decisions are made, and whether someone is willing to explain what actually happened afterwards — not just the clean version.

Perfect systems don’t exist.

Only systems that are understood, handled, and — when it matters — explained.

Even when it’s uncomfortable.

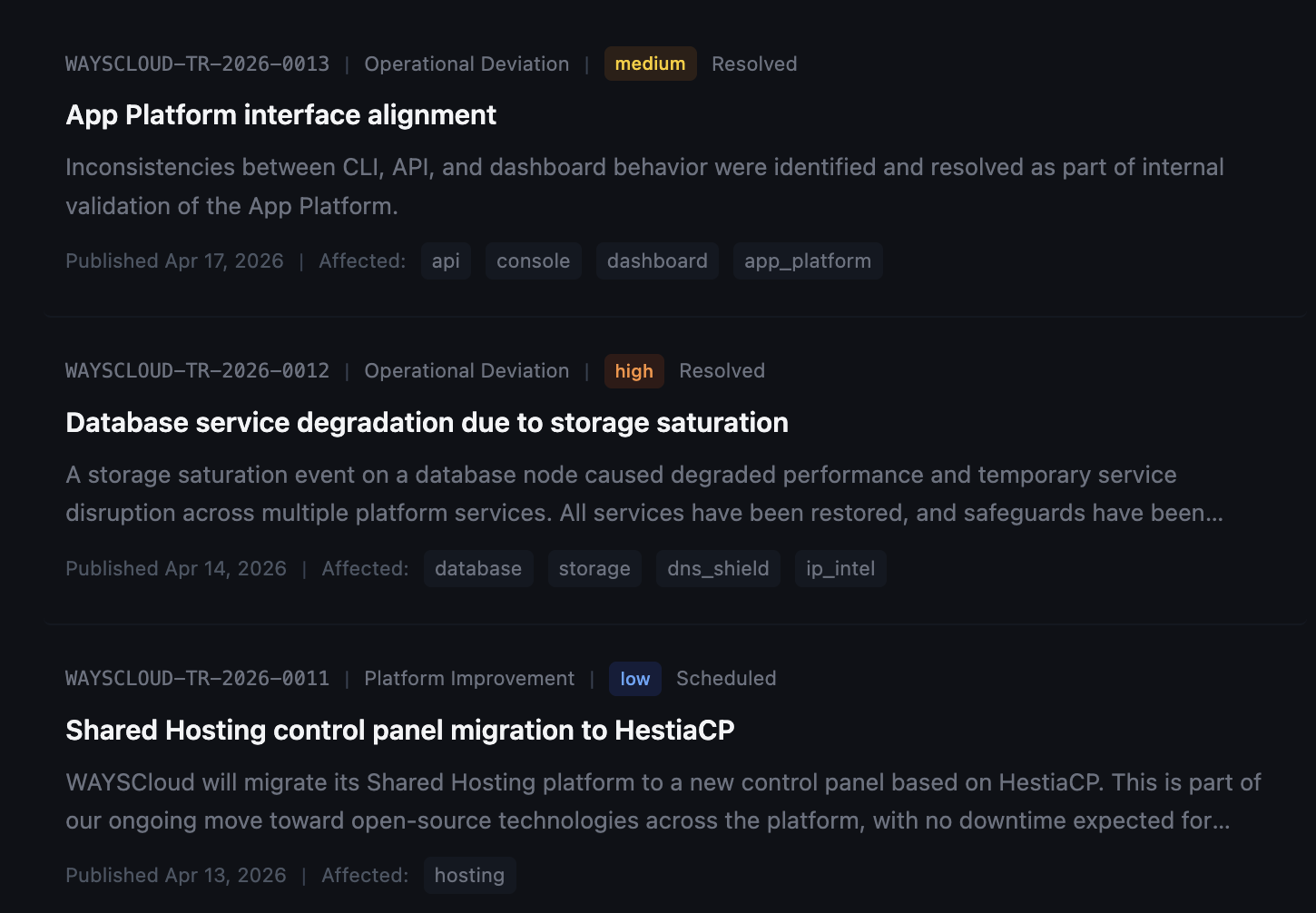

If you’re interested in how this looks in practice, more cases are documented in the Trust Center.